The term display resolution is quite ambiguous for many.

Many of us have seen brands flaunting numbers such as 1080p, 720p and 4K, but in reality, what are they?

Obviously, we all understand that the higher the resolution, the better the clarity of the visual output.

How so?

Clueless, right?

That’s why we are here with this article detailing some of the obscure facts behind display resolutions and their end effects on the picture quality delivered to you by the TV, smartphone or any other gadget with a display.

Display Resolution

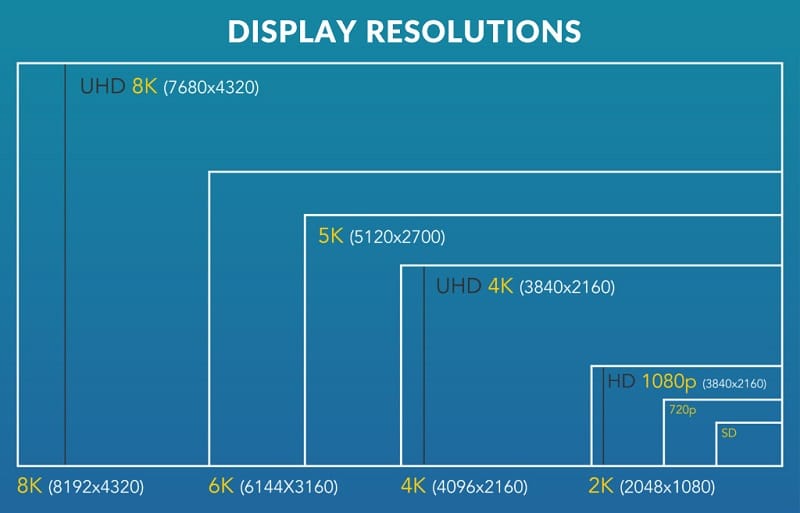

Display resolution is defined as the number of distinct pixels present in each dimension that can be displayed on a screen.

In simple terms, it can be understood as the number of pixels which a monitor or a screen displays along the horizontal and vertical axis.

Resolution is usually quoted in a width x height format, with pixels as the unit of measurement.

For example, you might have seen brands advertising their displays as 1920 x 1080 pixels, in which 1920 is the number of individual pixels across the width of the screen and 1080 is the number of pixels along its height.
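To make the width x height notation concrete, here is a minimal sketch that multiplies the two numbers to get the total pixel count of a screen. The function name `total_pixels` is just an illustrative choice.

```python
def total_pixels(width: int, height: int) -> int:
    """Return the total number of pixels for a width x height resolution."""
    return width * height


# A 1920 x 1080 display has a little over two million pixels.
print(total_pixels(1920, 1080))  # 2073600
```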

Display Resolution and Visual Quality

Display resolution is not the only factor which decides the output image quality of a display.

In fact, it’s just one of several factors that determine visual quality, alongside the resolution of the content, the frame rate at which the content is recorded and the capability of the decoding software.

The base unit of clarity in a visual is the pixel. Images are made up of tiny pixels, and the larger the number of pixels, the more detailed the end result.

In this regard, a Full HD display has more pixels than a lower-resolution display such as a 720p panel, and consequently produces clearer visuals.

However, there are certain other terms that one has to keep in mind regarding resolution and the resulting visual quality, some of which are discussed below.

The p and i notation

Whenever a brand advertises the resolution of the display on their products, you might have noticed the letter p or i following the resolution count.

For example, 1080p and 1080i differ noticeably when it comes to picture quality.

The p stands for progressive scanning and i stands for interlaced scanning.

In progressive scanning, all the horizontal lines of a frame are drawn in sequence, from top to bottom, in a single pass. This reduces screen flicker and delivers a pleasant and superior visual output.

In interlaced scanning, the odd lines on the display are scanned first, followed by the even lines.

This increases screen flicker and might strain your eyes. It also considerably degrades the final output quality.
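The difference between the two scanning methods comes down to the order in which lines are drawn. The sketch below is only an illustration of that ordering, with lines numbered from 1 at the top; the function names are made up for this example.

```python
def progressive_order(num_lines: int) -> list[int]:
    """Lines are drawn one after another, top to bottom, in a single pass."""
    return list(range(1, num_lines + 1))


def interlaced_order(num_lines: int) -> list[int]:
    """Odd-numbered lines are drawn first, then the even-numbered lines."""
    odd_lines = list(range(1, num_lines + 1, 2))
    even_lines = list(range(2, num_lines + 1, 2))
    return odd_lines + even_lines


print(progressive_order(6))  # [1, 2, 3, 4, 5, 6]
print(interlaced_order(6))   # [1, 3, 5, 2, 4, 6]
```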

Interlaced scanning is an older technology, and displays that rely on it come at significantly cheaper rates.

You should keep in mind while buying a TV that the type of scanning it uses matters as much as the display resolution.

Interpolation

Can you watch a Full HD video on a 4K television?

Yes, you can.

Most televisions entering the market these days can interpolate video signals live to match their native resolution.

While streaming a Full HD video at 1920 x 1080 pixels resolution, these televisions upscale the content to match the 4K resolution on the fly.
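Since 4K has exactly twice as many pixels as Full HD along each axis, the simplest possible form of upscaling is to repeat every pixel twice in both directions. The sketch below shows this nearest-neighbour approach on a tiny grid of numbers standing in for pixels. It is only an illustration of the idea; real televisions are likely to use more sophisticated interpolation, and the function name is hypothetical.

```python
def upscale_nearest(image: list[list[int]], factor: int) -> list[list[int]]:
    """Upscale a 2D grid of pixel values by an integer factor.

    Each source pixel is repeated `factor` times horizontally and
    each resulting row is repeated `factor` times vertically.
    """
    result = []
    for row in image:
        stretched = [pixel for pixel in row for _ in range(factor)]
        for _ in range(factor):
            result.append(list(stretched))
    return result


small = [[1, 2],
         [3, 4]]
for row in upscale_nearest(small, 2):
    print(row)
# [1, 1, 2, 2]
# [1, 1, 2, 2]
# [3, 3, 4, 4]
# [3, 3, 4, 4]
```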

Downsampling

Reading about interpolation will make you wonder about the technology in reverse.

Can you watch a Full HD video on a 720p display?

The answer to this question is also yes, but in a different manner, of course.

A 720p display will always downsample the Full HD video to fit its native resolution.

This means that the 1080p video will play on a non-Full HD device, but with reduced detail, a loss that most users will barely notice.
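To get a feel for how much information is dropped, the following sketch compares the pixel counts of the source video and the target display. It assumes the 720p display is 1280 x 720 pixels; the function name is illustrative.

```python
def downsample_ratio(source: tuple[int, int], target: tuple[int, int]) -> float:
    """Return how many source pixels each target pixel has to represent."""
    source_width, source_height = source
    target_width, target_height = target
    return (source_width * source_height) / (target_width * target_height)


# Full HD video shown on a 720p display (assumed to be 1280 x 720).
print(downsample_ratio((1920, 1080), (1280, 720)))  # 2.25
```

In other words, every pixel on the 720p screen stands in for 2.25 pixels of the original Full HD picture.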

Pixel Density

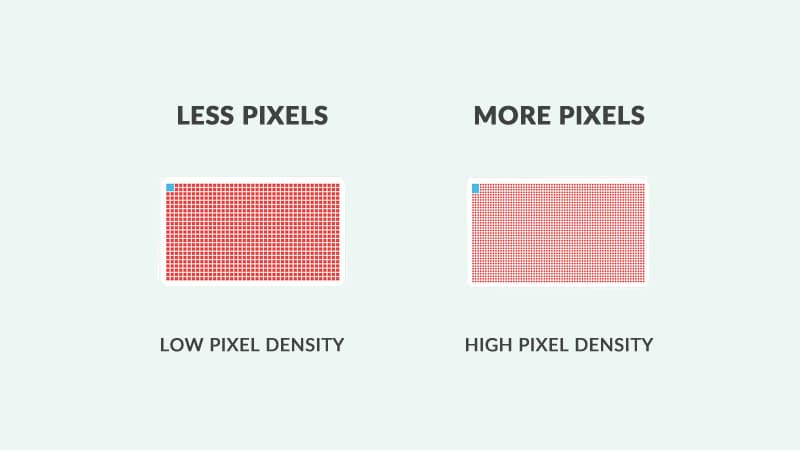

A higher resolution display doesn’t always guarantee a better-looking image.

If the size of the display is very large and the resolution is Full HD, the pictures displayed won’t be as vivid and clear as those on a smaller display with the same resolution.

That’s where the term pixel density comes into play.

Pixel density is a measure of how tightly the pixels are packed on the screen, usually expressed in pixels per inch (PPI).

It’s usually calculated with simple math involving the size and the resolution of the display.

The higher the pixel density, the more detailed and clear the image. This is the simplest point that you can keep in mind while choosing a gadget.
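A common way to do this math is to express pixel density in pixels per inch (PPI): divide the length of the screen diagonal in pixels by its length in inches. The sketch below follows that approach; the 6-inch and 40-inch screen sizes are just example values.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_inches: float) -> float:
    """Return the pixel density (PPI) of a display."""
    diagonal_px = math.hypot(width_px, height_px)  # Pythagoras on the pixel grid
    return diagonal_px / diagonal_inches


# The same Full HD resolution on two very different screen sizes.
print(round(pixels_per_inch(1920, 1080, 6)))   # 367
print(round(pixels_per_inch(1920, 1080, 40)))  # 55
```

The same 1920 x 1080 resolution looks far sharper on the small screen because the pixels are packed much more tightly.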

Commonly used resolutions in gadgets

Different gadgets come with displays of different resolutions, depending on a range of factors such as the intended use, the size of the gadget, the price, etc.

In this section, we discuss the most commonly used resolutions in gadgets around us.

- 720p HD

- 720p HD+

- 1080p Full HD

- 2K Quad HD

- 4K Ultra HD

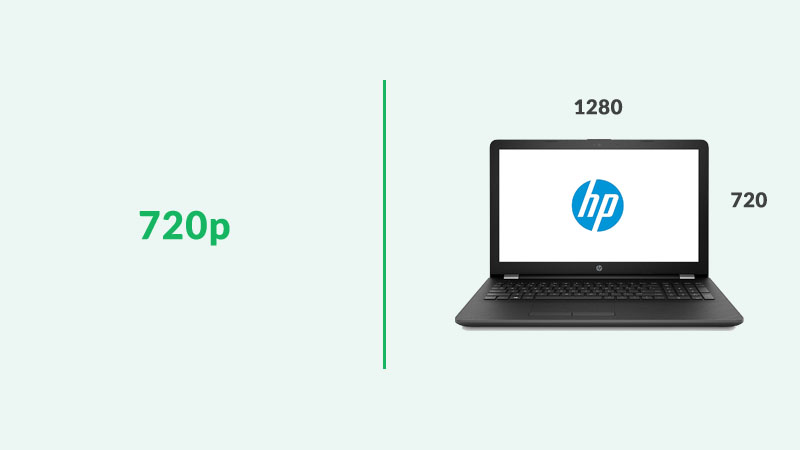

1. 720p HD

The 720p HD is a popular display resolution format followed by manufacturers, widely used for television sets and smartphone displays.

The 720 stands for the number of horizontal scan lines, each of which is a row of pixels. The resolution of 720p HD is 1280 x 720 pixels (720 x 1280 when a smartphone is held in portrait orientation).

720p TVs were available in the Indian market, but they are now slowly being phased out and replaced by Full HD panels, owing to the falling price of Full HD panels.

Budget smartphones still use 720p panels to cut costs. However, the difference between a 720p and a 1080p display is clearly visible on larger screens.

2. 720p HD+

This resolution is a relatively new entrant to the market, which came as a result of the introduction of notches to the smartphone display.

Seen on budget smartphones, the speciality of 720p HD+ displays, apart from the notches, is an aspect ratio taller than 16:9, such as 18:9 or 19:9.

These HD+ displays usually have a resolution of 720 x 1440 pixels, which works out to an 18:9 aspect ratio. Since the television and cinema standards have been pegged to the 16:9 aspect ratio, these HD+ displays are only available in smartphones as of now.
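If you want to check the aspect ratio of any resolution yourself, one simple approach is to divide both numbers by their greatest common divisor. The sketch below does that, with the longer side passed first; the function name is illustrative.

```python
from math import gcd


def aspect_ratio(long_side: int, short_side: int) -> tuple[int, int]:
    """Reduce a resolution to its simplest whole-number ratio."""
    divisor = gcd(long_side, short_side)
    return long_side // divisor, short_side // divisor


print(aspect_ratio(1920, 1080))  # (16, 9)
print(aspect_ratio(1440, 720))   # (2, 1), the same shape as 18:9
```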

3. Full HD (1080p)

Full HD is now the most common resolution in use, considered to be the de facto standard for a pleasant viewing experience.

Even with the advent of 4K technology, Full HD hasn’t lost its sheen and is widely used across gadget platforms ranging from TVs to smartphones.

A Full HD display has a native resolution of 1920 x 1080 pixels.

Full HD content is available in abundance, and satellite channels broadcast content in HD as well.

Investing in a TV or a smartphone with a Full HD display is certainly beneficial when it comes to the visual quality output.

1080p content on displays with native support for Full HD makes much more sense than any other resolution format out there.

Considering the internet speeds in developing countries such as India, streaming HD content without buffering is one of the biggest hurdles we are facing right now.

With the price of Full HD displays coming down, choosing 720p over a 1080p display is nothing short of a blunder, we’d say.

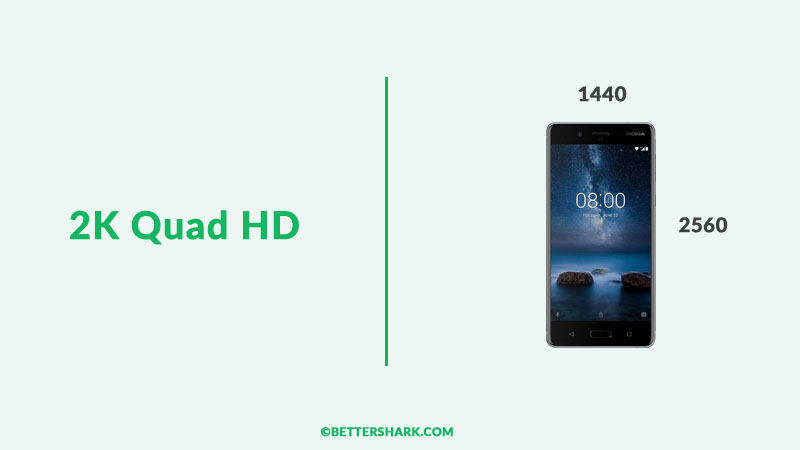

4. 2K Quad HD

Quad HD displays are generally found on expensive smartphones and certain desktop and laptop monitors.

Quad HD displays have a resolution of 2560 x 1440 pixels, four times the pixel count of 720p HD. In PC monitors, such displays are often referred to as 2K or WQHD (Wide Quad HD).

QHD screens are considerably sharper than Full HD displays but come at the cost of higher battery consumption.

5. 4K Ultra HD

4K Ultra HD is currently the most talked about resolution standard in the industry.

Packing four times as many pixels as a typical Full HD display, the 4K Ultra HD resolution rides high on the image quality and sharpness it delivers.

The prices of 4K displays have been coming down for a while.

Affordable 4K televisions can now be purchased from the market for a budget under Rs.30,000. The same is the case with 4K desktop monitors as well.

4K displays come with a resolution of 3840 x 2160 pixels.

With gaming and online streaming gaining massive popularity in the country, 4K is a sure bet to succeed, provided the internet quality improves constantly over the course of time.

With native 4K content becoming widely available, watching it on a fully compatible display takes the experience to a whole new level.
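To put the resolutions covered in this section side by side, the sketch below compares the pixel count of each one against Full HD. The figures are written with the longer side first, and the dictionary and function names are only illustrative.

```python
RESOLUTIONS = {
    "720p HD": (1280, 720),
    "720p HD+": (1440, 720),
    "1080p Full HD": (1920, 1080),
    "2K Quad HD": (2560, 1440),
    "4K Ultra HD": (3840, 2160),
}


def relative_pixel_count(name: str, baseline: str = "1080p Full HD") -> float:
    """Return the pixel count of `name` as a multiple of the baseline."""
    width, height = RESOLUTIONS[name]
    base_width, base_height = RESOLUTIONS[baseline]
    return (width * height) / (base_width * base_height)


for name in RESOLUTIONS:
    print(name, round(relative_pixel_count(name), 2))
# 720p HD 0.44
# 720p HD+ 0.5
# 1080p Full HD 1.0
# 2K Quad HD 1.78
# 4K Ultra HD 4.0
```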

HDR

HDR stands for High Dynamic Range.

HDR photos have been a feature for a while, delivering deep blacks and the whitest whites on the photos you click, making them look more realistic.

Now HDR support has been added to displays as well, with a handful of formats such as HDR10, Dolby Vision and HLG, developed by competing companies.

So what’s the difference between HDR for photos and HDR for displays?

Well, HDR for photos is a method of capturing extra information with the camera sensor, while HDR for displays is a method of showing video captured in HDR in a way that a normal TV can’t.

We all know about the contrast ratio. It’s the ratio of the brightest brights on a display to the darkest darks. In the case of HDR-enabled displays, this range is significantly higher.
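As a rough illustration, the contrast ratio can be worked out by dividing the brightest level a display can show by its darkest level. The brightness figures in the sketch below are hypothetical values picked only for the example, not measurements of any real display.

```python
def contrast_ratio(peak_brightness: float, black_level: float) -> float:
    """Return the ratio of the brightest white to the darkest black."""
    return peak_brightness / black_level


# Hypothetical figures, in nits (candelas per square metre).
standard_display = contrast_ratio(peak_brightness=300, black_level=0.3)
hdr_display = contrast_ratio(peak_brightness=1000, black_level=0.05)

print(f"{round(standard_display)}:1")  # 1000:1
print(f"{round(hdr_display)}:1")       # 20000:1
```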

A 4K HDR display will reproduce content shot in HDR much more realistically and accurately than a typical 4K display.

Also, HDR content can’t be viewed to its full potential on a normal 4K display without HDR support.

Conclusion

It’s accurate to say that resolution determines the sharpness of a display, but by how much is determined by various other factors including the pixel density and the hardware capability to decode signals.

Display technology is one field where trends change overnight.

You can’t be future-proof when it comes to gadgets, but being informed about certain aspects of technology, such as display resolution, can make your life much easier.

With this article, we hope you have understood the jargon around display resolution.

We hope you will make use of this information the next time you are about to purchase a gadget.

And when you do that, come back and check out our site to know the best gadgets under any budget.

Have a great day and see ya later!